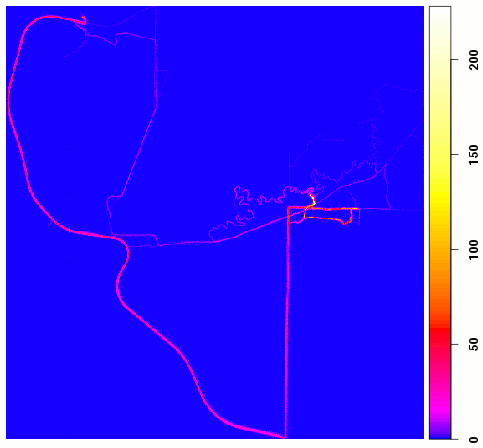

Location map

A couple years ago we got iPhones, and one of my favorite apps is the RunKeeper app, which tracks your outdoor activities using the phone’s built-in GPS. When I first started using it I compared the results of the tracks from the phone to a Garmin eTrex, and they were so close that I’ve given up carrying the Garmin. The fact that the phone is always with me, makes keeping track of all my walks with Nika, and trips to work on my bicycle or skis pretty easy. Just like having a camera with you all the time means you capture a lot more images of daily life, having a GPS with you means you have the opportunity to keep much better track of where you go.

RunKeeper records locations on your phone and transfers the data to the RunKeeper web site when you get home (or during your trip if you’ve got a good enough cell signal). Once on the web site, you can look at the tracks on a Google map, and RunKeeper generates all kinds of statistics on your travels. You can also download the data as GPX files, which is what I’m working with here.

The GPX files are processed by a Python script that inserts each point into a spatially-enabled PostgreSQL database (PostGIS), and ties it to a track.

Summary views allow me to generate statistics like this, a summary of all my travels in 2010:

| Type | Miles | Hours | Speed |

| Bicycling | 538.68 | 39.17 | 13.74 |

| Hiking | 211.81 | 92.84 | 2.29 |

| Skiing | 3.17 | 0.95 | 3.34 |

Another cool thing I can do is use R to generate a map showing where I’ve spent the most time. That’s what’s shown in the image on the right. If you’re familiar at all with the west side of the Goldstream Valley, you’ll be able to identify the roads, Creek, and trails I’ve been on in the last two years. The scale bar is the number of GPS coordinates fell within that grid, and you can get a sense of where I’ve travelled most. I’m just starting to learn what R can do with spatial data, so this is a pretty crude “analysis,” but here’s how I did it (in R):

library(RPostgreSQL)

library(spatstat)

drv <- dbDriver("PostgreSQL")

con <- dbConnect(drv, dbname="new_gps", host="nsyn")

points <- dbGetQuery(con,

"SELECT type,

ST_X(ST_Transform(the_geom, 32606)) AS x,

ST_Y(ST_Transform(the_geom, 32606)) AS y

FROM points

INNER JOIN tracks USING (track_id)

INNER JOIN types USING (type_id)

WHERE ST_Y(the_geom) > 60 AND ST_X(the_geom) > -148;"

)

points_ppp <- ppp(points$x, points$y, c(min(points$x), max(points$x)), c(min(points$y), max(points$y)))

Lab.palette <- colorRampPalette(c("blue", "magenta", "red", "yellow", "white"), bias=2, space="Lab")

spatstat.options(npixel = c(500, 500))

map <- pixellate(points_ppp)

png("loc_map.png", width = 700, height = 600)

image(map, col = Lab.palette(256), main = "Gridded location counts")

dev.off()

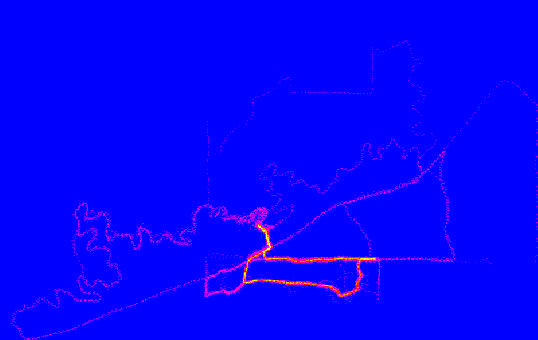

Here’s a similar map showing just my walks with Nika and Piper:

Walks with Nika and Piper

And here's something similar using ggplot2:

library(ggplot2) m <- ggplot(data = points, aes(x = x, y = y)) + stat_density2d(geom = "tile", aes(fill = ..density..), contour = FALSE) m + scale_fill_gradient2(low = "white", mid = "blue", high = "red", midpoint = 5e-07)

I trimmed off the legend and axis labels:

ggplot2, geom_density2d

Overflow near DNR pond

I took Friday off from work again this week and brewed Overflow Old Ale. I drained the chilled wort directly onto the yeast cake from last week’s brewing effort, so I should get a nice fast start from the yeast. The recipe was supposed to be a high alcohol (7%) lightly hopped ale, but instead of an expected 70% efficiency at extracting sugars from the grain, I wound up at 57%. That’s the lowest efficiency I’ve ever gotten. I wouldn’t mind so much if they were consistent, but they’re all over the map, and I don’t know what is causing the variation. Same base grains, same procedure and equipment, same thermometer, etc. It’s a mystery.

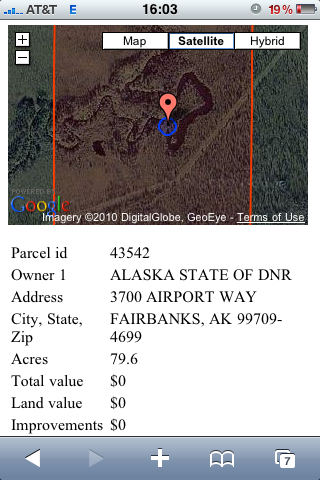

Location of photo

The good news is that nothing went wrong, and beer is beer regardless of whether it turned into the batch you were planning to brew. I’m sure I’ll enjoy drinking it at least as much as I did brewing it.

The batch is named for the overflow that’s currently moving across the top of the frozen Creek. I took advantage of it and used it in my counterflow chiller to bring the boiling wort down to pitching temperatures in 10–15 minutes. The water is at least a foot deep, and I don’t think it’s been warm enough for it to be a natural phenomenon. Last year’s overflow event was rumored to be a large discharge from the mine several miles upstream from our house, and this event looks similar. Despite providing chiller water, it’s a drag because it’ll take at least a week for it to freeze solid again and until it snows it’ll be treacherous to walk on. I had hoped the overflow would have flooded the DNR pond again, as it was really fun ice skating on it last year, but the floodwaters aren’t high enough to cross onto the pond. The photo at the top is taken where the Creek passes the pond.

The photo on the right shows the Google Maps image of the location I took the photo using the iPhone web app I wrote. This morning I added some code to draw a blueish buffer around the GPS location that’s sized using the reported GPS accuracy. It also scales the map so that the entire property fits on the screen (I zoomed in before taking the photo on the right). I think the app is pretty much finished, except I want to add Fish and Game Management Units to it. Unfortunately, they don’t have GIS files for the smaller Management Areas, so it won’t be as useful as it could be. You can see I’ve drained my battery playing with it!

Updated web app

http://swingleydev.com/gis/loc.html

(for GPS enabled phones within the Fairbanks North Star Borough)

Now that my two year web hosting contract with BlueHost is coming up, I’ve decided to migrate over to Linode. It’s about twice as expensive, but you get your own Xen virtual machine to do with as you want. With BlueHost I wound up needing to compile a whole bunch of software packages (gnuplot, remind, spatialite, a variety of Python modules, etc.) just to get my site to work. And I couldn’t upgrade PostgreSQL, which left me unable to use PostGIS. With my Linode, I put the latest Ubuntu on it, and was able to install all the software I need.

As mentioned, PostGIS is one of the benefits of this. Instead of loading the Fairbanks North Star Borough tax parcel database into spatialite and using a CGI program to extract data, I’m able to query the same data in PostGIS directly from PHP. That means I can do all sorts of cool stuff with the data I’m extracting.

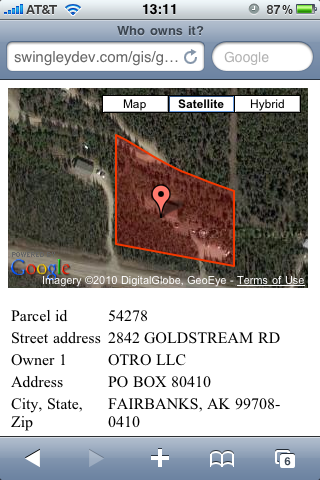

Here, I am using the Google Maps API to draw the property polygon and put a marker at the location from the iPhone’s GPS. You can see the result on the right, taken while eating lunch at the office. If you’ve got a GPS-enabled smart device and you’re in the Fairbanks North Star Borough, the link is http://swingleydev.com/gis/loc.html.

The code that drives the map is at the bottom of this post. The complicated query in the middle of it gets the outermost ring from the polygon, and dumps each vertex as a separate row. This section is in the middle of the Google Maps JavaScript that builds the overlay polygon. Note that this doesn’t handle the few properties in the database that have multiple polygons in their geometry, and it ignores the holes that might be present (if a small chunk out of the middle of a property was sold, for example). I expect these cases are pretty infrequent.

One enhancement would be to include a circular buffer around the point marker so you could get a sense of where the GPS accuracy places you on the map. This is complicated with Google because of the custom projection they’re using. I think it’s called “Google Mercator,” but spatialreference.org lists several projections that look like they might be compatible. I’ll have to give those projections a try to see if I can make a projected circular buffer that looks like a circle. It would also be nice to set the map extent to match the extent of the parcel you’re inside. I don’t know if that’s part of the Google Maps API or not.

Here’s the code:

<script type="text/javascript">

function initialize() {

if (GBrowserIsCompatible()) {

var map = new GMap2(document.getElementById("map_canvas"));

var mapControl = new GMapTypeControl();

map.addControl(mapControl);

map.setCenter(new GLatLng(<?php echo $lat; ?>, <?php echo $lon; ?>), 14);

var polygon = new GPolygon([

<?php

$query = "

SELECT

ST_X(ST_PointN(ST_ExteriorRing(ST_GeometryN(wkb_geometry, 1)),

generate_series(1, ST_NPoints(ST_ExteriorRing(ST_GeometryN(wkb_geometry, 1))))))

AS x,

ST_Y(ST_PointN(ST_ExteriorRing(ST_GeometryN(wkb_geometry, 1)),

generate_series(1, ST_NPoints(ST_ExteriorRing(ST_GeometryN(wkb_geometry, 1))))))

AS y

FROM tax_parcels

WHERE ST_Intersects(wkb_geometry, ST_SetSRID(ST_MakePoint($lon, $lat), 4326));";

$result = pg_query($query);

$num_rows = pg_num_rows($result);

for ($i = 0; $i < $num_rows - 1; $i++) {

$row = pg_fetch_row($result);

list($vlon, $vlat) = $row;

echo "new GLatLng($vlat, $vlon),";

}

$row = pg_fetch_row($result);

list($vlon, $vlat) = $row;

echo "new GLatLng($vlat, $vlon)";

pg_free_result($result);

?>

], "#f33f00", 2, 1, "#ff0000", 0.2);

map.addOverlay(polygon);

var point = new GLatLng(<?php echo $lat; ?>, <?php echo $lon; ?>);

map.addOverlay(new GMarker(point));

}

}

</script>

Today I went for a walk on the Creek with Nika and Piper and it occurred to me that the iPhone ought to be able to tell me not only where I am (which it does quite well with the Google-driven map application), but on whose property I’m walking through. It took me a little time, but I now have a webapp (http://swingleydev.com/gis/loc.html) that can do this (don't bother clicking on the link unless you're on an iPhone or other GPS-enabled device).

The result is a pair of pages. The first one shows you your current location, speed, and heading. With a press of a button (and a delay while the database finds the property owner) you can see the Borough information on the property you’re currently inside (assuming you’re in the Fairbanks North Star Borough—this page doesn’t do non-Borough residents any good).

Here’s how I set it up:

My web hosting provider doesn’t have a new enough version of PostgreSQL to run PostGIS, which means I need to use the spatially-enabled version of SQLite3, called Spatialite. To get the parcel database from the shapefile to Spatialite requires the following steps. Some parcels are eliminated in this process, but it’s a small fraction of the parcels in the database:

Download the parcels shapefile:

$ wget ftp://co.fairbanks.ak.us/GIS/tax_parcels.zip

Unzip it and insert it into a spatially enabled PostgreSQL database using ogr2ogr. Crash the process immediately:

$ unzip tax_parcels.zip

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-overwrite -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Fix the column definitions:

sql> DELETE FROM tax_parcels;

sql> ALTER TABLE tax_parcels ALTER COLUMN

sqft_calc TYPE numeric (22,12);

Re-import the data:

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-append -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Convert the geometry column to MULTIPOLYGON:

sql> ALTER TABLE tax_parcels DROP CONSTRAINT "enforce_geotype_wkb_geometry";

sql> ALTER TABLE tax_parcels ADD CONSTRAINT "enforce_geotype_wkb_geometry"

CHECK (geometrytype(wkb_geometry) = 'MULTIPOLYGON'::text OR

wkb_geometry IS NULL OR

geometrytype(wkb_geometry) = 'POLYGON'::text);

sql> UPDATE tax_parcels SET wkb_geometry = ST_Multi(wkb_geometry);

sql> ALTER TABLE tax_parcels DROP CONSTRAINT "enforce_geotype_wkb_geometry";

sql> ALTER TABLE tax_parcels ADD CONSTRAINT "enforce_geotype_wkb_geometry"

CHECK (geometrytype(wkb_geometry) = 'MULTIPOLYGON'::text OR

wkb_geometry IS NULL);

sql> UPDATE geometry_columns SET type='MULTIPOLYGON'

WHERE f_table_name='tax_parcels' AND f_geometry_column='wkb_geometry';

Re-import the data (there will be thousands of errors, but this insert should add the MULTIPOLYGON rows that weren’t inserted the first time around):

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-append -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Get rid of the illegal polygons:

sql> DELETE FROM tax_parcels WHERE NOT ST_IsValid(wkb_geometry);

Convert to spatialite:

$ ogr2ogr -f "SQLite"

tax_parcels.sqlite

PG:"dbname='test'"

-dsco SPATIALITE=YES

With the data in the proper format, I built a mobile web page that can pull location information from the mobile device, display it on the screen, and pass this information to a page that finds and displays the parcel information from the Spatialite database. JavaScript handles gathering the location information, but because I don’t control the web server, I had to write a CGI script to pass a query to spatialite and format the results as a list. This CGI is called by the main page from a <form> element. The spatialite query looks like this:

sqlite> SELECT street_add, owner_firs, owner_last,

owner1, owner2, owner3, mail_add, ci_st_zip,

round(acres_calc, 1) as acres, ’$’ || total as total,

’$’ || land as land, ’$’ || improvemen as improvements,

pan, sub, block, lot, road_water, lot_size, units,

neighborho, primary_us, tax_status, tax_year, mill_rate,

business, year_built, situs_numb, situs_name

FROM tax_parcels

WHERE Intersects(GEOMETRY, SetSRID(MakePoint(lon, lat), 4326));

Again, implementing this would be a lot easier if I could install PostGIS on the server and use PHP to access the data directly. Because I can’t, spatialite and CGI do the trick.

Update: I added a few more steps to convert the initially imported POLYGON layers to MULTIPOLYGON, which then allows us to include the MULTIPOLYGON rows from the shapefile.

The company I work for (ABR, Inc.) pays it’s employees to use alternative transportation—bicycle, walk, run, ski, etc.—to get to work. It’s not a lot of money, but human behavior being what it is, even a small incentive really works. I’ve been riding my bicycle to work three or four times a week, carrying a hand-held GPS (Garmin eTrex Vista Cx) along with me and logging the data into spatially aware databases (PostGIS and spatialite). You can see a map of last week’s data on my GIS page.

One problem with my GPS is that I get really inaccurate elevation data, and as anyone who has ridden a bicycle knows, elevation changes are really important to how fast you can ride. I’ve noticed that I ride much faster going home than on my way to work, and had concluded (until examining the data…) that it must be an uphill ride to work.

But how to fix the elevation data? One approach would be to download the new global digital elevation model (DEM) that’s based on data from Japan’s ASTER satellite and use this to find the corrected elevation for GPS trackpoint. I’ll probably try this at some point just to see how well the DEM matches my data. But the approach I wound up using is to generate an “average” track based on all my trips to and from work.

There’s probably a way to do this with a single SQL statement, but I couldn’t figure out how, so I did the point iteration in Python. The first SQL query takes an existing trip and segments the trip into a series of points. I chose a maximum interval of 50 meters because that was approximately how often the GPS reports data at the speeds I’m normally travelling. Here’s the PostGIS SQL:

SELECT ST_AsText(ST_PointN(

segpoints.points,

generate_series(1, ST_NPoints(segpoints.points)))

)

FROM (

SELECT

ST_GeometryN(

ST_Segmentize(track_utm, 50),

generate_series(1, ST_NumGeometries(ST_Segmentize(track_utm, 50)))

)

AS points FROM tracks WHERE tid=67

) AS segpoints;

This yields a series of points (in WKT format) along the track.

My Python script takes each of these points and finds the average X, Y, and Z (elevation) coordinates of all points within 50 meters of the point. Here’s the query:

SELECT count(*), avg(lat), avg(lon), avg(ele)*3.2808399 AS avg_ft FROM points WHERE ST_Within( ST_SetSRID(ST_GeomFromText(POINT_WKT), 32606), ST_Buffer(point_utm, 50) );

And the results:

Elevation and speed, Jul 1 ride to ABR

The red squares show the elevations along my route, and the green markers show the speed I was traveling at that point. In this case, I’m riding to work, so home is on the left and ABR is on the right. The big hill on the left is Miller Hill Road. In general, you can see that a ride to work involves going over Miller Hill, then a gradual climb along Sheep Creek Road to the intersection with Ester Dome Road (the little hitch in this section is the hairpin curve before Anne’s Greenhouses where so many people go off the road in winter…), followed by a steeper descent to ABR. My speed tracks the elevation changes fairly closely. I’ll be interested in seeing how these plots change over time as my legs and cardiovascular system strengthens. Will the green points rise uniformly, or will I be able to see improvements in individual aspects of my performance such as hill climbing?

One final point: the elevation at home and ABR are essentially the same. My conclusion after all this is that elevation can’t account for why my rides home are so much faster (1.28 mph, on average). Wind? Cold mornings? Excitement to get home?

For reference, here's my ride home on the same day:

Elevation and speed, Jul 1 ride from ABR